How Do We Know What's Real Anymore?

With AI and deep fakes, anything is possible. Crowd-sourced reality. Blockchain verification.

With a new war starting in the Middle East, many are questioning the graphic and disturbing images being shared on social media. Real? Or fake? AI generated, possibly?

It’s my opinion that modern AI tools are not quite good enough yet to create realistic-looking video that contains a lot of complexity, such as a group of people engaged in combat. At least, commercially available AI tools aren’t that good yet. (Who knows what secret government agencies have access to!)

After all, current AI image generators are notorious for getting simple things like fingers wrong.

Sure, AI works great for imaginary sexpots on OnlyFans. But I would guess that those digital models were worked on and polished up by humans before being placed into exotic digital environments - in other words, it’s a lot of CGI work.

CGI still isn’t that realistic either. We can usually sense that something is slightly fake. A notable example is the younger Indiana Jones in The Dial of Destiny: we all noted how the lighting was kept a little darker to hide the CGI, and it was still obvious despite a $295 million budget.

But with the combination of AI and CGI, any sort of video “footage” could be created for someone with enough money, time, and resources.

Will this happen in wars, where events are happening quickly and the time to release a shocking video is immediate?

Probably, though I’m guessing that’s in the near future and not necessarily right this second, but I could be wrong.

Either way, we’re looking at a crisis of confidence. People are already so skeptical that they don’t believe news reports about what’s going on in the world, because “I’ll believe it when I see it” no longer works when what we see can’t be trusted.

What will be the answer to this?

Well, back in the day, before we had photographs and video, we relied on reporters and historians to tell us what happened.

Relying on reporters won’t solve the problem though, with trust in news media waning in favor of social media.

Tools exist that claim to be able to tell whether an image was generated by an AI or made by a human, but they are unreliable and often return false results. Users have also reported that converting an image from JPG to PNG can change the result. (You can see examples here but be forewarned they include the image of a burnt baby.)

This image is not AI. The user took a photo of a pen on their desk with a phone, and the image was inaccurately labeled as generated by AI:

So even AI tools can’t tell what’s AI. But these same tools are being used to claim that real images are fake. It’s a mess.

I predict a few things will develop in response to the issue of possible AI “deep fakes.” First, we’ve already seen an increase in organizations claiming to be the arbiters of “truth.” Unfortunately, as Matt Taibbi has fearlessly reported, these organizations appear to be more interested in censorship than truth. The “censorship industrial complex” will be fed by AI, which will be used to justify clampdowns on free speech and image sharing.

Recently, the EU pressured X / Twitter to remove “illegal content & disinformation” from the platform.

Unfortunately, “violent” content may include coverage of what happens in an attack or war, and many believe strongly that citizens have a right to see what really happened in order to make more informed judgments on issues.

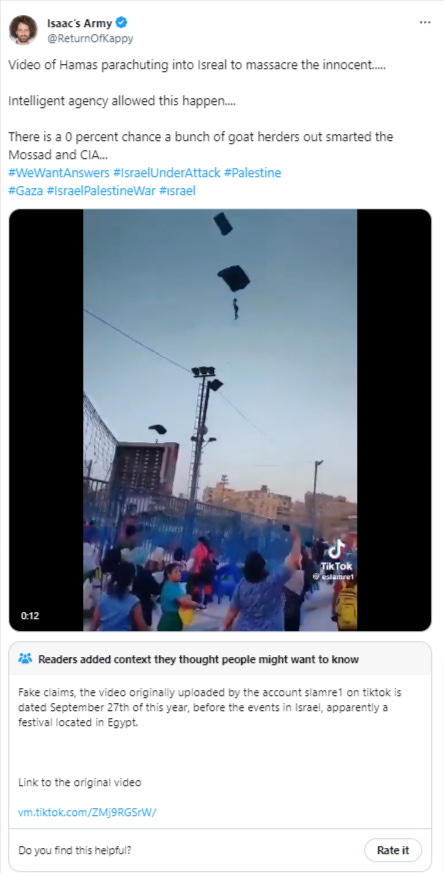

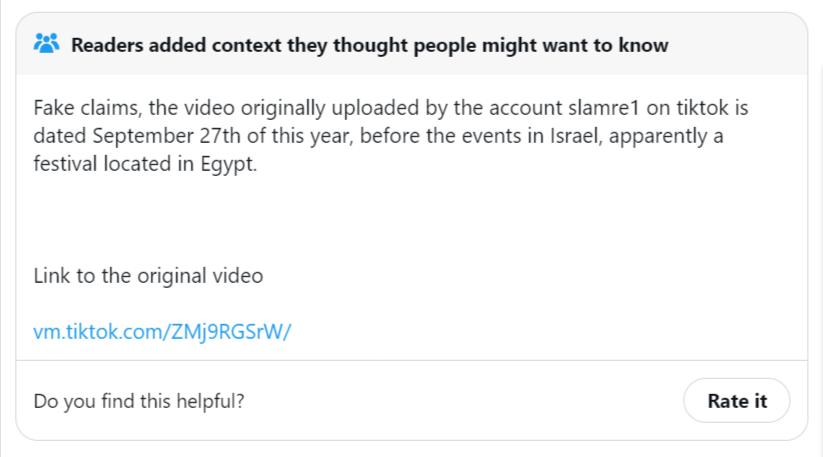

But further problems occur when old or fake footage gets mixed up with real footage, muddying the waters.

X now uses Community Notes to help discover and highlight misleading posts, such as this one, which mistakes an old parachute video from Egypt for footage of the music festival attacked by Hamas:

I think Community Notes is a great way to moderate. It puts the power in the hands of everyday people instead of unaccountable Big Tech companies or government agencies.

I would be curious to see if some sort of crowdsourcing could be used to verify reports of what’s happening in real time, sort of like the earthquake heat maps used by the USGS. When an event happens, you log in and report what YOU saw in your area. We can then collate these stories from real people to get a big-picture narrative.
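For the technically curious, here is a rough sketch of what that collating step could look like. Everything in it is my own assumption for illustration: the `Report` fields, the `collate` function, and the 0.1-degree grid size are made up, and this is not how the USGS or anyone else actually does it. It simply buckets eyewitness reports into map grid cells and counts how many different people reported from each cell.

```python
import math
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Report:
    """One eyewitness report (hypothetical fields, for illustration only)."""
    reporter_id: str
    lat: float
    lon: float
    description: str


def cell_for(lat: float, lon: float, cell_deg: float = 0.1) -> tuple[int, int]:
    """Map a coordinate to a grid cell roughly cell_deg degrees on a side."""
    return (math.floor(lat / cell_deg), math.floor(lon / cell_deg))


def collate(reports: list[Report], cell_deg: float = 0.1) -> dict[tuple[int, int], int]:
    """Count distinct reporters per grid cell.

    Counting distinct reporter IDs (rather than raw reports) means one
    person posting repeatedly does not inflate a cell's count.
    """
    reporters_by_cell: dict[tuple[int, int], set[str]] = defaultdict(set)
    for r in reports:
        reporters_by_cell[cell_for(r.lat, r.lon, cell_deg)].add(r.reporter_id)
    return {cell: len(ids) for cell, ids in reporters_by_cell.items()}


if __name__ == "__main__":
    sample = [
        Report("a", 10.12, 20.34, "heard an explosion"),
        Report("b", 10.18, 20.36, "saw smoke"),
        Report("a", 10.13, 20.35, "second post from the same person"),
        Report("c", 10.55, 20.91, "nothing unusual here"),
    ]
    for cell, count in sorted(collate(sample).items()):
        print(cell, count)
```

A cell with many independent reporters is more believable than a cell with one, which is the whole idea behind a heat map. Of course, this does nothing to stop fake accounts, so a real system would still need some way to know that reporters are real people.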

However, it is more likely that governments around the world are going to clamp down even harder on free speech under the guise of stopping “misinformation.”

AI will be used to do this and weed out stories before we have a chance to see them.

I also predict that blockchain technologies will be used to create and then verify images and videos. In other words, the blockchain ledger will have a record of WHEN the image was created and how, and each and every edit will be embedded in the file so that it can be tracked and verified.

What are your thoughts? Please share them below:
